Comparing Single-modal and Multimodal Interaction in an Augmented Reality System

ISMAR-Adjunct, 2020

Zhimin Wang, Huangyue Yu, Haofei Wang, Zongji Wang and Feng Lu

Abstract

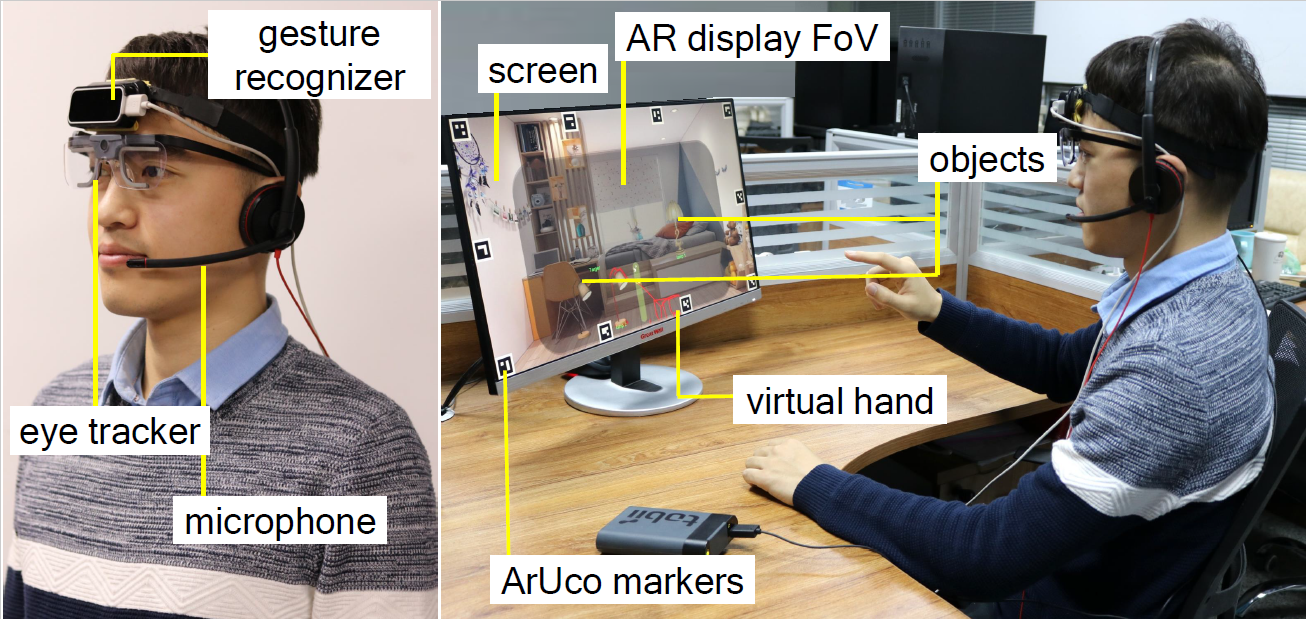

Multimodal interaction is expected to offer a better user experience in Augmented Reality (AR), and has thus become a recent research focus. However, due to the lack of hardware-level support, most existing works combine only two modalities at a time, e.g., gesture and speech. Gaze-based interaction techniques have been explored for screen-based applications, but have rarely been used in AR systems. In this paper, we propose a multimodal interactive system that integrates gaze, gesture and speech in a flexibly configurable augmented reality system. Our lightweight head-mounted device supports accurate gaze tracking, hand gesture recognition and speech recognition simultaneously. More importantly, the system can be easily configured into different modality combinations to study the effects of different interaction techniques. We evaluated the system in a table lamp scenario and compared the performance of different interaction techniques. The experimental results show that the Gaze+Gesture+Speech combination is superior in terms of performance.

Presentation Video

Related Work

Our related work:

Gaze-Vergence-Controlled See-Through Vision in Augmented Reality

Edge-Guided Near-Eye Image Analysis for Head Mounted Displays

Interaction With Gaze, Gesture, and Speech in a Flexibly Configurable Augmented Reality System